1. Compute Architecture: Where Work Actually Runs

At a high level, both processor families are heterogeneous, but the way they divide work across cores leads to very different system behavior.

The i.MX 93 platform is relatively straightforward. You have Cortex-A55 cores handling your main application workload and a Cortex-M33 sitting alongside them for real-time tasks. That separation is clean: you can run Linux on the A cores and offload time-critical work, such as sensor polling, control loops, and low-power wake functions, to the M33 without much coordination overhead.

The AM62 is more layered. You’re working with Cortex-A53 cores for Linux, a Cortex-M4F for microcontroller tasks, a Cortex-R5F for device management, and a PRU subsystem designed for deterministic, cycle-level I/O. That gives you significantly more flexibility, especially in industrial environments, but it also means you’re managing multiple execution domains, each of which introduces its own firmware, toolchain, and integration points.

This is where the difference becomes practical. If your application requires tight timing control, industrial protocols, synchronized I/O, or deterministic communication, the AM62’s PRU is extremely useful: it delivers cycle-level timing determinism that a general-purpose MCU core simply can’t match. But if your system is more application-driven (UI, connectivity, sensor processing), the simpler partitioning on i.MX 93 tends to reduce integration effort.

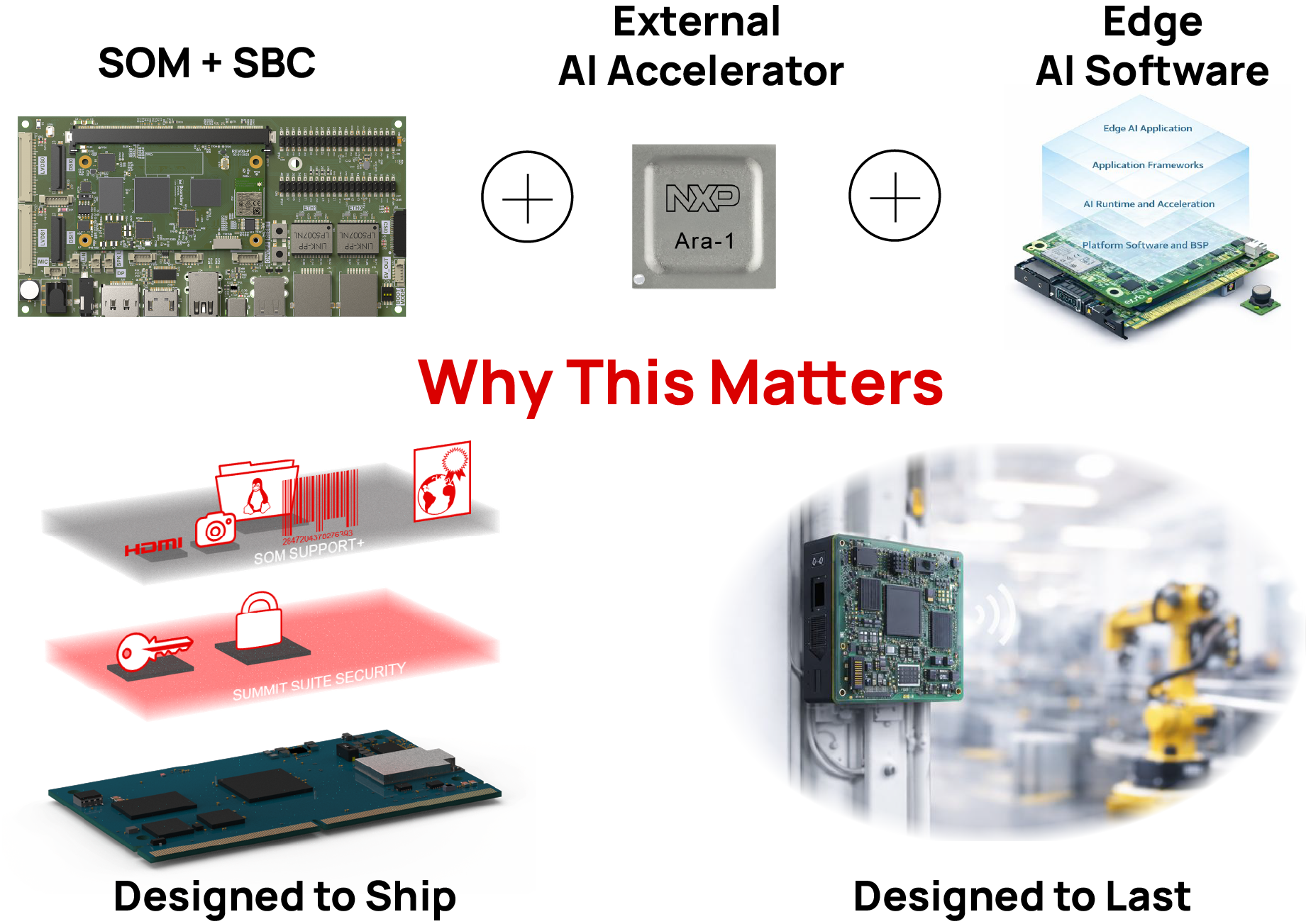

The other major factor is AI. The i.MX 93 includes an integrated Ethos-U65 microNPU that can handle edge inference workloads without pulling everything onto the CPU. That changes how you design the system: tasks like object detection or keyword recognition can run locally and efficiently, without adding external hardware.

With AM62, there’s no integrated NPU. Any AI workload either runs on the CPU or requires an external accelerator. That doesn’t make it unusable, but it does push complexity into other parts of the design, since power, latency, and BOM are all affected.